Hello blog, it's been a long time. Since I finished the GA4 book I have had a good break, and lots of life events have happened, such as a new job, new philosophies and new family arrangements, but I always intended to pick this thread up again once I had an idea of where it would best lead.

As long-time readers may remember, I’ve always tried to work at the meta-horizons of where I am, restlessly looking for the next exciting lesson. That impulse has led me to Large Language Models (LLMs), an interest sparked by the ChatGPT revolution but foreshadowed by image generation models such as Stable Diffusion a few months before.

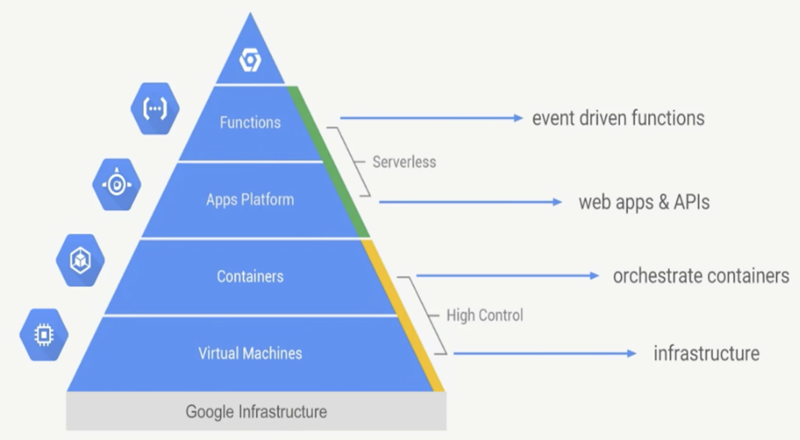

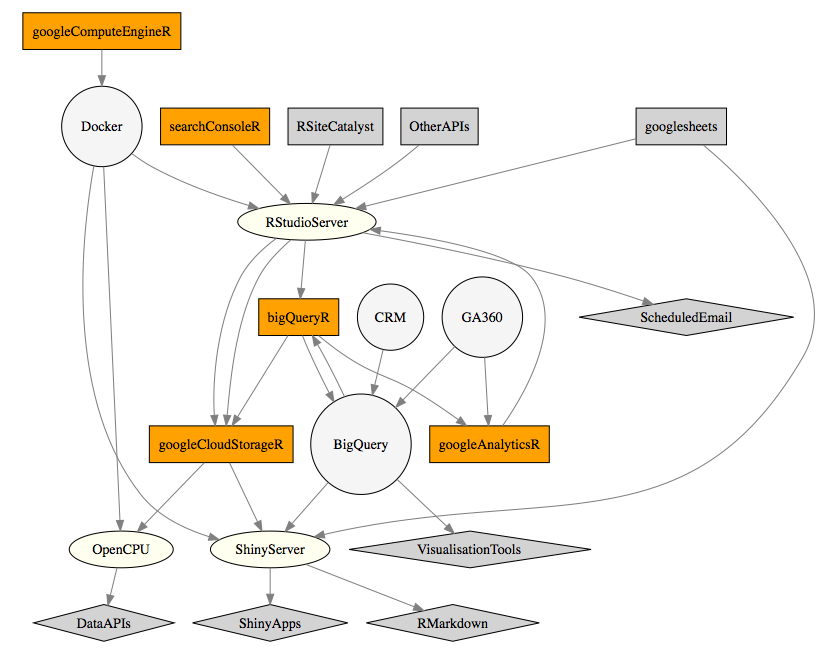

A key facilitator has been Harrison Chase’s LangChain, an active hive of open-source goodness. It has allowed me to learn, imagine and digest the new and active field of LLMOps (Large Language Model Operations), that is, the data engineering needed to make LLMs actually useful on a day-to-day basis. I took it upon myself to see how I could apply my Google Cloud Platform (GCP) data engineering background to the new toys LangChain has helped provide.

This means I now have a new brain, Edmonbrain, that I converse with daily in Google Chat, Slack and Discord. I have fed it interesting URLs, Git repos and whitepapers so I can build up a unique bot of my very own. I also fed it my own book, and can ask it questions about it, for example: