Scheduled R scripts via Cloud Scheduler

2022-03-26

Source:vignettes/cloudscheduler.Rmd

Cloud Scheduler is a scheduler service in the Google Cloud that uses cron-like syntax to schedule tasks. It can trigger HTTP or Pub/Sub jobs via cr_schedule().
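As a reading aid for the cron strings used throughout this page, the sketch below splits a five-field cron expression into named parts. The helper parse_cron() is hypothetical and written in base R only; it is not part of googleCloudRunner and does no validation beyond counting fields.

```r
# Split a five-field cron expression into named parts.
# parse_cron() is a hypothetical helper, not part of googleCloudRunner.
parse_cron <- function(expr) {
  fields <- strsplit(trimws(expr), "[[:space:]]+")[[1]]
  if (length(fields) != 5) {
    stop("expected 5 cron fields, got ", length(fields))
  }
  names(fields) <- c("minute", "hour", "day_of_month", "month", "day_of_week")
  fields
}

# "14 5 * * *" reads as minute 14 of hour 5, on every day
cron <- parse_cron("14 5 * * *")
print(cron)
```

Reading the fields by name makes it easier to spot a swapped minute and hour before a schedule is created.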

googleCloudRunner uses Cloud Scheduler to help schedule

Cloud Builds but Cloud Scheduler can schedule HTTP requests to any

endpoint:

cr_scheduler(name = "my-webhook", "14 5 * * *",

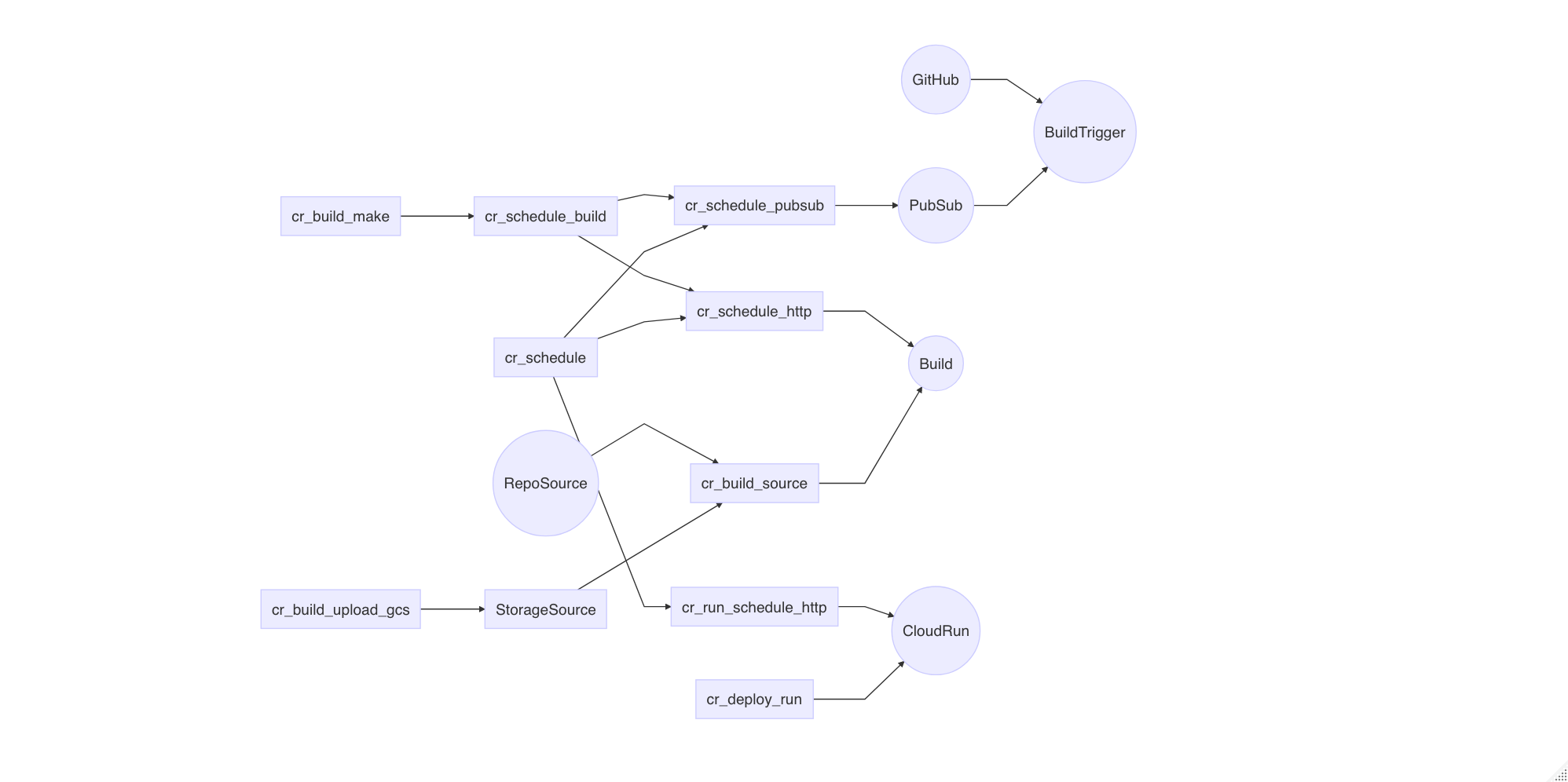

httpTarget = HttpTarget(httpMethod="GET", uri = "https://mywebhook.com"))How scheduling works with various functions in

googleCloudRunner is shown in the below plot for an

overview:

Schedule Cloud Build

Since Cloud Build can run any code in a container, scheduling builds becomes a powerful way to set up batched data flows.

A demo below shows how to set up a Cloud Build on a schedule from R:

build1 <- cr_build_make("cloudbuild.yaml")

cr_schedule("15 5 * * *", name="cloud-build-test1",
            httpTarget = cr_schedule_http(build1))

We use cr_build_make() and cr_schedule_http() to create the Cloud Build API request, and then send that to the Cloud Scheduler API via its httpTarget parameter.
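To make the flow above more concrete, here is a rough sketch of the kind of HTTP target that ends up on the schedule, written as a plain R list. This is an illustration only and not the object cr_schedule_http() actually returns: the project ID and service account email are made up, the body is a placeholder, and the field names follow my understanding of the Cloud Scheduler REST resource. Inspect a real schedule with cr_schedule_get() to see the true structure.

```r
# Illustrative only: a hand-written list, NOT the output of cr_schedule_http().
# project_id and the service account email are hypothetical values.
project_id <- "my-project"

http_target_sketch <- list(
  # Cloud Build API endpoint that creates a build in the project
  uri = sprintf("https://cloudbuild.googleapis.com/v1/projects/%s/builds",
                project_id),
  httpMethod = "POST",
  # placeholder for the build definition that is sent as the request body
  body = "<encoded build definition>",
  # the identity Cloud Scheduler uses when it makes the call
  oauthToken = list(
    serviceAccountEmail = "my-scheduler@my-project.iam.gserviceaccount.com"
  )
)

str(http_target_sketch)
```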

Update a schedule by specifying the same name and the overwrite=TRUE flag. You then need to supply only what you want to change; everything else will remain as previously configured.

cr_schedule("my-webhook", "12 6 * * *", overwrite=TRUE)

Schedule Build Triggers using PubSub

cr_schedule_http() works by creating an API call that will trigger a Cloud Build from the Cloud Scheduler service, but this can be harder to set up from an authentication standpoint and can also give unhelpful errors that are hard to debug.

For more robust and transparent scheduling of builds it is recommended that you use PubSub to trigger builds via a build trigger that has been set up to respond to Pub/Sub messages. This holds additional advantages, such as being able to accept PubSub messages from other sources to trigger your builds, and being able to parametrise your builds using the content within the PubSub message data.

The general strategy is:

- Create and test your build locally using cr_build()
- Create a PubSub topic either in the Google Cloud console or using library(googlePubsubR)
- Create a build trigger for your build using cr_buildtrigger() with a topic set using cr_buildtrigger_pubsub()
- Schedule pushes to the PubSub topic using cr_schedule_pubsub(). You can choose to set up schedules with parameters that are passed into the Builds.

An example is given below:

cloudbuild <- system.file("cloudbuild/cloudbuild.yml",

package = "googleCloudRunner")

bb <- cr_build_make(cloudbuild)

# create a pubsub topic either in the Google Console web UI or via library(googlePubsubR)

library(googlePubsubR)

pubsub_auth()

topics_create("test-topic")

# create build trigger that will watch for messages to your created topic

pubsub_trigger <- cr_buildtrigger_pubsub("test-topic")

# create the build trigger with in-line build

cr_buildtrigger(bb, name = "pubsub-triggered", trigger = pubsub_trigger)

# create scheduler that calls the pub/sub topic

cr_schedule("cloud-build-pubsub",
            "15 5 * * *",
            pubsubTarget = cr_schedule_pubsub("test-topic"))

Builds can also be parametrised to respond to parameters sent via your PubSub topic. The cloudbuild below echoes back the value sent in var1 of the PubSub message, and the scheduler is set up to send in parameters.

cloudbuild <- system.file("cloudbuild/cloudbuild_substitutions.yml",

package = "googleCloudRunner")

the_build <- cr_build_make(cloudbuild)

# var1 is sent via Pubsub to the buildtrigger

message <- list(var1 = "hello mum")

send_me <- googlePubsubR::msg_encode(jsonlite::toJSON(message))

# create build trigger that will work from pub/subscription

pubsub_trigger <- cr_buildtrigger_pubsub("test-topic")

cr_buildtrigger(the_build, name = "pubsub-triggered-subs", trigger = pubsub_trigger)

# create scheduler that calls the pub/sub topic with a parameter

cr_schedule("cloud-build-pubsub-params",
            "15 5 * * *",
            pubsubTarget = cr_schedule_pubsub("test-topic",
                                              data = send_me))

This opens up a lot of possibilities for when and where your code can run, in reaction to both events (git commits, files hitting cloud storage, generic events on GCP) and on a schedule.
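When debugging a parametrised schedule it can help to look at what the message payload contains before it is sent. The sketch below uses only jsonlite (already used in the example above) to build the JSON, base64-encode it and decode it again. It assumes that the encoded payload is base64 of the JSON text; it does not call googlePubsubR or any Google API. Note that toJSON() wraps length-one vectors in an array by default; auto_unbox = TRUE is shown as an option to compare against, not as something the package requires.

```r
library(jsonlite)

message <- list(var1 = "hello mum")

# default: length-one vectors become JSON arrays
default_json <- toJSON(message)
# optional: unbox length-one vectors into scalars
unboxed_json <- toJSON(message, auto_unbox = TRUE)

print(default_json)
print(unboxed_json)

# round trip through base64 to check the payload survives encoding
encoded <- base64_enc(as.character(unboxed_json))
decoded <- rawToChar(base64_dec(encoded))
round_trip <- fromJSON(decoded)
print(round_trip$var1)
```

Which JSON shape your build expects depends on how the substitution in your cloudbuild file reads the message, so check it against a test message first.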

Schedule Cloud Run applications

Public HTTP endpoints

Via Cloud Scheduler you can set up a scheduled hit of your HTTP

endpoints, via GET, POST or any other methods you have coded into your

app. cr_run_schedule_http() will help you create the HTTP

endpoint for you to pass to cr_schedule():

run_me <- cr_run_schedule_http(

"https://example-ewjogewawq-ew.a.run.app/echo?msg=blah",

http_method = "GET"

)

cr_schedule("cloud-run-scheduled", schedule = "4 16 * * *", httpTarget = run_me)

Private HTTP endpoints

When you create an app via cr_deploy_run("my-app", allowUnauthenticated = FALSE), a new service account called “my-app-invoker” will be created with the rights to invoke the app. Use that email to tell the scheduler how to call the app:

# for authenticated Cloud Run apps - create with allowUnauthenticated=FALSE

cr_deploy_run("my-app", allowUnauthenticated = FALSE)

# deploying via R will help create a service email called my-app-invoker

cr_run_email("my-app")

#> "my-app-invoker@your-project.iam.gserviceaccount.com"

# schedule the endpoint

my_app <- cr_run_get("my-app")

endpoint <- paste0(my_app$status$url, "/fetch_stuff")

app_sched <- cr_run_schedule_http(endpoint,

http_method = "GET",

email = cr_run_email("my-app"))

cr_schedule("my-app-scheduled-1",
            schedule = "16 4 * * *",
            httpTarget = app_sched)

Schedule an R script

A common use case is scheduling an R script. This is provided by cr_deploy_r().

A minimal example is:

# create an r script that will echo the time

the_build <- cr_build_yaml(cr_buildstep_r("cat(Sys.time())"))

# construct a Cloud Build API call to call that build

build_call <- cr_schedule_http(the_build)

# schedule the API call for every minute

cr_schedule("test1", "* * * * *", httpTarget = build_call)

# you should return a scheduler object

test_schedule <- cr_schedule_get("test1")

# once finished, delete the schedule

cr_schedule_delete("test1")

After it triggers you should see a “SUCCESS” in the Cloud Scheduler console and associated builds in the Cloud Build web UI.

The above assumes you have followed the recommended authentication setup using cr_setup() and that the checks in cr_setup_test() all pass.

In particular you can check the email that the API call will run

under on Cloud Scheduler in

test_schedule$httpTarget$oauthToken$serviceAccountEmail

A more complicated R script example

This example shows running R scripts across a source such as GitHub or Cloud Repositories. This is used for builds such as package checks and website builds. It uses the helper deployment function cr_deploy_r(), which is also available as an RStudio gadget.

# this can be an R filepath or lines of R read in from a script

r_lines <- c("list.files()",

"library(dplyr)",

"mtcars %>% select(mpg)",

"sessionInfo()")

# example code runs against a source that is a mirrored GitHub repo

source <- cr_build_source(RepoSource("googleCloudStorageR",

branchName = "master"))

# check the script runs ok

cr_deploy_r(r_lines, source = source)

# schedule the script once it's working
cr_deploy_r(r_lines, schedule = "15 21 * * *", source = source)

Supplying your own Docker image

The examples above are all using the default of

rocker/r-base for the R environment. If you have package

dependencies for your script you would need to install them within the

script.
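As a minimal sketch of installing dependencies within the script, the helper below prepends install lines to a vector of R lines such as the r_lines used earlier. with_packages() is a hypothetical helper written in base R, not a googleCloudRunner function, and it assumes the image has a CRAN mirror configured so that install.packages() works without a repos argument.

```r
# Prepend "install if missing" lines to a vector of R script lines.
# with_packages() is a hypothetical helper, not part of googleCloudRunner.
with_packages <- function(r_lines, packages) {
  installs <- sprintf(
    'if (!requireNamespace("%s", quietly = TRUE)) install.packages("%s")',
    packages, packages
  )
  c(installs, r_lines)
}

script_lines <- with_packages(
  c("library(dplyr)", "mtcars %>% select(mpg)"),
  packages = "dplyr"
)

# check the generated lines are at least syntactically valid R
invisible(parse(text = script_lines))
cat(script_lines, sep = "\n")
```

Installing on every run adds to the build time, which is one reason to prefer a custom image for anything beyond a couple of small packages.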

An alternative is to customise the Docker image so it includes the R

packages you need. For instance, rocker/tidyverse would

load the Tidyverse packages.

You may also want to customise the R docker image further - in this case you can build your docker image first with your R libraries installed, then specify that image in your R deployment.

Once you have built your R Docker image, supply it to cr_deploy_r() via its r_image argument.

cr_deploy_docker("my_folder_with_dockerfile",

image_name = "gcr.io/my-project/my-image",

tag = "dev")

cr_deploy_r(r_lines,
            schedule = "15 21 * * *",
            source = source,
            r_image = "gcr.io/my-project/my-image:dev")

The logs of the scheduled scripts are in the history section of Cloud Build - each scheduled run creates a new Cloud Build.

RStudio Gadget - schedule R scripts

If you are using RStudio, installing the library will enable an RStudio Addin that can be called after you have set up the library as per the setup page.

It includes a Shiny gadget that you can call via the Addin menu in

RStudio, via googleCloudRunner::cr_deploy_gadget() or

assigned to a hotkey (I use CTRL+SHIFT+D).

This sets up a Shiny UI to help smooth out deployments as pictured:

Build and schedule an R script (custom)

If you want to customise deployments, then the steps covered by

cr_deploy_r() are covered below.

To schedule an R script the steps are:

- Create your R script

- Select or build an R enabled Dockerfile to run the R code

- [optional] Build the R image

- Select a source location that the R code will run upon

- Schedule calling the Docker image using Cloud Scheduler

1. Create your R script

The R script can hold anything, but make sure it is self-contained with auth files, data files etc. All paths should be relative to the script and available in the source you choose to build with (e.g. GCS or git repo) or within the Docker image executing R.

Uploading auth files within Dockerfiles is not recommended for security reasons. The recommended way to download auth files is to use Secret Manager, which is available as a build step macro via cr_buildstep_secret().

2. Bundle the R script with a Dockerfile

You may only need vanilla R or the tidyverse, in which case select the presets “rocker/r-ver” or “rocker/verse”.

You can also create your own Docker image - point it at the folder

with your script and a Dockerfile (perhaps created with

cr_buildstep_docker())
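For illustration, the sketch below writes a very small Dockerfile into a folder from R. write_r_dockerfile() is a hypothetical base R helper, not part of googleCloudRunner, and the Dockerfile contents (base image, package install line, script name and copy destination) are assumptions you would adapt to your own script.

```r
# Write a minimal Dockerfile next to an R script.
# write_r_dockerfile() is a hypothetical helper, not part of googleCloudRunner.
write_r_dockerfile <- function(folder,
                               script = "my_r_script.R",
                               base_image = "rocker/r-ver",
                               packages = character()) {
  dir.create(folder, recursive = TRUE, showWarnings = FALSE)
  lines <- paste("FROM", base_image)
  if (length(packages) > 0) {
    # install the requested CRAN packages at image build time
    pkgs <- paste(sprintf("'%s'", packages), collapse = ", ")
    lines <- c(lines, sprintf("RUN R -e \"install.packages(c(%s))\"", pkgs))
  }
  # copy the script into the image root
  lines <- c(lines, sprintf("COPY %s /%s", script, script))
  path <- file.path(folder, "Dockerfile")
  writeLines(lines, path)
  invisible(path)
}

folder <- file.path(tempdir(), "my-scripts")
path <- write_r_dockerfile(folder, packages = "dplyr")
cat(readLines(path), sep = "\n")
```

The folder would also need to contain the script itself before it is passed to a Docker build.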

3. Build the Docker image on Cloud Build

Once you have your R script and Dockerfile in the same folder, you need to build the image.

This can be automated via the cr_deploy_docker() function, supplying the folder containing the Dockerfile:

cr_deploy_docker("my-scripts/", "gcr.io/your-project/your-name")

Once the image is built successfully, you do not need to build it again for the scheduled calls - you could set up a rebuild that runs only if the R code changes.

4. Make the build and optional source

You may want your R code to operate on data in Google Cloud Storage or a git repo. Specify that source in your build, then make the build object:

GitHub Source

New from version 0.5 you can run schedules via triggered build triggers. If using build triggers then you can specify the source in the build trigger itself rather than within the build. This is a bit more flexible since you can then simply commit to the GitHub repo to change the running code and/or data for the next time the schedule runs.

Assuming you have a scheduled pubsub setup then you configure the buildtrigger to run each time that pubsub is called like the example below:

schedule_me <- cr_schedule_pubsub(topic)

cr_schedule("target_pubsub_schedule",

schedule = "15 4 * * *",

pubsubTarget = schedule_me)

# no regex allowed for sourceToBuild repo objects

gh <- cr_buildtrigger_repo("MarkEdmondson1234/googleCloudRunner", branch = "master")

pubsub_sub <- cr_buildtrigger_pubsub(topic)

cr_buildtrigger("cloudbuild_targets.yaml",
                name = "targets-scheduled-demo",
                sourceToBuild = gh,
                trigger = pubsub_sub)

Alternate code sources

There are also some other legacy ways to include code/data sources

within your builds, which you may still want to do if you are not

scheduling a build trigger but a build directly using

cr_schedule_http(). It is recommended though to use the

PubSub topics for easier debugging and transparency.

Repo Source

This is if you have your code files within Cloud Source repositories - this can include mirrors from other git providers such as GitHub - see setting up git.

schedule_me <- cr_build_yaml(
  # Rscript runs the file; R -e would evaluate its argument as an expression
  steps = cr_buildstep("your-r-image",
                       c("Rscript", "my_r_script.R"),
                       prefix="gcr.io/your-project")
)

# maybe you want a repo source
repo_source <- cr_build_source(
  RepoSource("MarkEdmondson1234/googleCloudRunner",
             branchName="master"))

my_build <- cr_build_make(schedule_me, source = repo_source)

Cloud Storage Source

This keeps your R code source in a Cloud Storage bucket.

The first method uses cr_build_upload_gcs() to create a tar.gz of the files in a local folder and upload it:

schedule_me <- cr_build_yaml(
  # Rscript runs the file; R -e would evaluate its argument as an expression
  steps = cr_buildstep("your-r-image",
                       c("Rscript", "my_r_script.R"),
                       prefix="gcr.io/your-project")
)

# upload a tar.gz of the files to use as a source:
gcs_source <- cr_build_upload_gcs("local_folder_with_r_script")

my_build <- cr_build_make(schedule_me, source = gcs_source)

Download files in an initial buildstep

When there are only a few files, it may be easiest to first download the R file from your bucket into /workspace/ via a buildstep using gsutil, not using source at all:

schedule_me <- cr_build_yaml(
  steps = c(
    # copy the script from the bucket into the shared /workspace/ folder
    cr_buildstep(
      id = "download R file",
      name = "gsutil",
      args = c("cp",
               "gs://mark-edmondson-public-read/my_r_script.R",
               "/workspace/my_r_script.R")
    ),
    # Rscript runs the file; R -e would evaluate its argument as an expression
    cr_buildstep("your-r-image",
                 c("Rscript", "/workspace/my_r_script.R"),
                 prefix="gcr.io/your-project")
  )
)

my_build <- cr_build_make(schedule_me)

Download files via git

Another alternative is to use git within the buildsteps to clone from a repo - these can be private git repos if you have uploaded your git SSH key to Secret Manager:

cr_build_yaml(

steps = c(

cr_buildstep_gitsetup("github-ssh"),

cr_buildstep_git(c("clone",

"git@github.com:github_name/repo_name")),

cr_buildstep_r("list.files()")

)

)

Schedule calling the Docker image using Cloud Scheduler

Once you have a working build, schedule that build object by passing

it to the cr_schedule_http() function, which constructs the

Cloud Build API call for Cloud Scheduler to call at its scheduled

times.

# create a scheduler http endpoint that will trigger your build

cloud_build_target <- cr_schedule_http(my_build)

# schedule it

cr_schedule("15 5 * * *", name="scheduled_r",
            httpTarget = cloud_build_target)

You can automate updates to the script and/or Docker container or schedule separately, by redoing the relevant step above, or by using cr_buildtrigger() to automate deployments.