Cloud Run is a service that lets you deploy container images without worrying about the underlying servers or infrastructure. It is called with cr_run(), or you can automate a deployment via cr_deploy_run().

If you would like your R code to react in real time to HTTP or Pub/Sub events, for example as a website or API endpoint, Cloud Run is a good fit.

If you want to run scripts that are triggered once, set up to trigger on GitHub events or Pub/Sub, or scheduled using Cloud Scheduler, then Cloud Build is better suited to your use case.

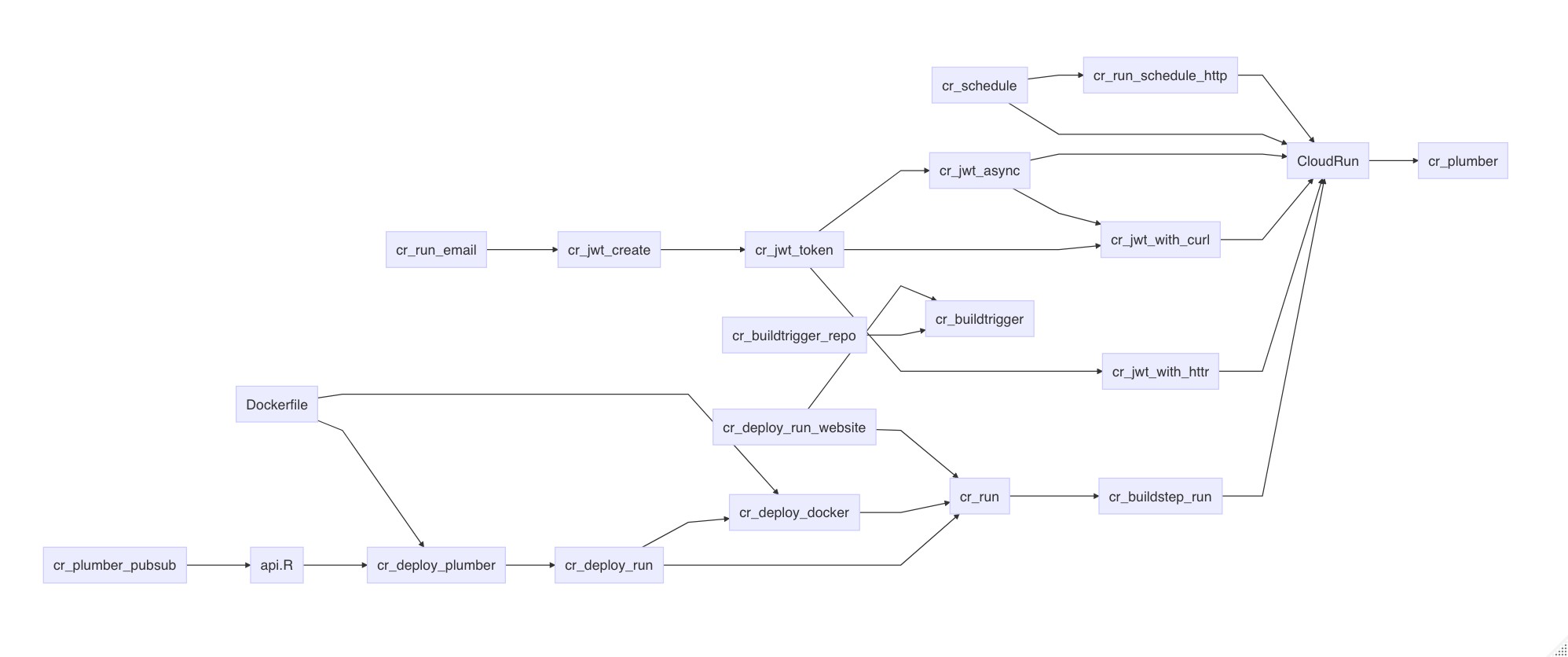

An overview of the functionality available is in this diagram:

Quickstart - plumber API

- Make an R API via plumber that contains the entry file api.R. You can use the demo example in system.file("example", package="googleCloudRunner") if you like, which is reproduced below:

#' @get /

#' @html

function(){

"<html><h1>It works!</h1></html>"

}

#' @get /hello

#' @html

function(){

"<html><h1>hello world</h1></html>"

}

#' Echo the parameter that was sent in

#' @param msg The message to echo back.

#' @get /echo

function(msg=""){

list(msg = paste0("The message is: '", msg, "'"))

}

#' Plot out data from the iris dataset

#' @param spec If provided, filter the data to only this species (e.g. 'setosa')

#' @get /plot

#' @png

function(spec){

myData <- iris

title <- "All Species"

# Filter if the species was specified

if (!missing(spec)){

title <- paste0("Only the '", spec, "' Species")

myData <- subset(iris, Species == spec)

}

plot(myData$Sepal.Length, myData$Petal.Length,

main=title, xlab="Sepal Length", ylab="Petal Length")

}

- Create a Dockerfile for the API. See the bottom of this page for how to do this automatically with https://o2r.info/containerit/

The example folder has this Dockerfile:

FROM gcr.io/gcer-public/googlecloudrunner:master

COPY ["./", "./"]

ENTRYPOINT ["R", "-e", "pr <- plumber::plumb(commandArgs()[4]); pr$run(host='0.0.0.0', port=as.numeric(Sys.getenv('PORT')))"]

CMD ["api.R"]

- Deploy via the cr_deploy_plumber() function:

library(googleCloudRunner)

my_plumber_folder <- system.file("example", package="googleCloudRunner")

cr <- cr_deploy_plumber(my_plumber_folder)

#2019-11-12 10:34:29 -- File size detected as 903 bytes

#2019-11-12 10:34:31> Cloud Build started - logs:

#https://console.cloud.google.com/gcr/builds/40343fd4-6981-41c3-98c8-f5973c3de386?project=1080525199262

#Waiting for build to finish:

# |===============||

#Build finished

#2019-11-12 10:35:43> Deployed to Cloud Run at:

#https://cloudrunnertest2-ewjogewawq-ew.a.run.app

#==CloudRunService==

#name: cloudrunnertest2

#location: europe-west1

#lastModifier: 1080525199262@cloudbuild.gserviceaccount.com

#containers: gcr.io/mark-edmondson-gde/cloudrunnertest2

#creationTimestamp: 2019-11-12T10:35:19.993128Z

#observedGeneration: 1

#url: https://cloudrunnertest2-ewjogewawq-ew.a.run.app

- Enjoy your API

RStudio Gadget - deploy plumber script

If you are using RStudio, installing the library enables an RStudio Addin that can be called once you have set up the library as per the setup page.

It includes a Shiny gadget that you can call via the Addin menu in

RStudio, via googleCloudRunner::cr_deploy_gadget() or

assigned to a hotkey (I use CTRL+SHIFT+D).

This sets up a Shiny UI to help smooth out deployments as pictured:

What did it do?

Deployment via cr_deploy_plumber() automated these

steps:

- Uploads the Dockerfile and your api.R file to your Google Cloud Storage bucket

- Creates a Cloud Build job for building the files uploaded to the GCS bucket, and pushes the Docker images to Google Container Registry

- Deploys that container to Cloud Run

It will launch a browser showing the build on Cloud Build, or you can wait for progress in your local R session. Upon successful deployment it gives you a CloudRunService object with details of the deployment.

All the above stages can be customised for your own purposes, using the functions explained below.

Customising Cloud Run deployments

The Cloud Run API is not called directly when deploying. Instead, a Cloud Build is created for the deployment: cr_run() creates a Cloud Build that includes cr_buildstep_run().

You can build the deployment yourself by using

cr_buildstep_run() within your own cr_build().

This may cover things like downloading encrypted resources necessary for

a build, running other code etc.

If you have an existing image you want to deploy on Cloud Run

(usually one that serves up HTTP content, such as a website or via

library(plumber)) then you only need to supply that image

to deploy:

cr_run("gcr.io/my-project/my-image")

Cloud Run needs specific ports available in your container, so you may want to consult the documentation if you do. In particular, look at the container contract, which specifies what the Docker container requirements are.
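Part of that container contract is that the container listens on the port Cloud Run passes in through the PORT environment variable, which is why the Dockerfiles on this page read Sys.getenv('PORT'). As a minimal sketch, the hypothetical helper get_port() below (not part of googleCloudRunner) resolves that port and falls back to 8080 when the variable is unset; the fallback is an assumption made here for local testing.

```r
# Hypothetical helper, not part of googleCloudRunner.
# Cloud Run sets PORT at runtime; the 8080 fallback is an assumption for local runs.
get_port <- function(default = 8080L) {
  port <- as.integer(Sys.getenv("PORT", unset = NA))
  if (is.na(port)) default else port
}

# Simulate the Cloud Run environment
Sys.setenv(PORT = "9000")
port_on_cloud_run <- get_port()

# Simulate a local session where PORT is not set
Sys.unsetenv("PORT")
port_locally <- get_port()

# In a plumber entrypoint this could be used as:
# pr$run(host = "0.0.0.0", port = get_port())
```

Binding to host 0.0.0.0 rather than localhost matters as well, since requests arrive from outside the container.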

If you want to do the common use case of building the container first

before deployment to Cloud Run, you can do so by using the helper

cr_deploy_docker():

cr_deploy_docker("my-image")

cr_run("gcr.io/my-project/my-image")

If you want this in the same Cloud Build, then this is available via the buildsteps cr_buildstep_docker() and cr_buildstep_run().

Cloud Run deployment functions

- cr_deploy_run() wraps the steps described above to build the Dockerfile and deploy it to Cloud Run, setting authentication as needed.

- cr_deploy_plumber() adds checks for plumber API files. You need to make sure you have an api.R file in the deployment folder you provide, which will hold the plumber code.

- cr_deploy_html() adds steps to deploy an nginx web server ready to serve the HTML files you supply.

Regions

You can deploy to any GCP region that supports Cloud Run. A list is

maintained and updated from the latest package build that you can see in

googleCloudRunner::cr_regions

Pub/Sub

Pub/Sub is a messaging service used throughout GCP to transfer messages to services, for instance when Cloud Storage objects are created, logging filter conditions are met, or BigQuery tables are updated. It is useful to be able to react to these messages with R code, which Cloud Run + Plumber enables by providing an HTTP endpoint for push Pub/Sub subscriptions.

A helper function is included with googleCloudRunner that decodes the standard Pub/Sub message into an R object. To use it, place the function below into your plumber script and deploy:

pub <- function(x){paste("Echo:", x)}

#' Receive pub/sub message

#' @post /pubsub

#' @param message a pub/sub message

function(message=NULL){

googleCloudRunner::cr_plumber_pubsub(message, pub)

}

The pub() function can be any R code you like, so you can change it to trigger an analysis or other R tasks. The data within a Pub/Sub message can also be used as a parameter to your code, such as an object name or the timestamp of when the event fired. An example is also in the use case section of the website.
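To make the decoding step more concrete: a push subscription delivers the payload base64-encoded in the data field of the message. The sketch below imitates that step locally with a hypothetical decode_pubsub() function. It is an illustration under the assumption that the jsonlite package is installed, not the actual implementation of cr_plumber_pubsub(), and the fake_message contents are made up for the demo.

```r
library(jsonlite)

# Hypothetical stand-in for the decoding step, for illustration only
decode_pubsub <- function(message, f = identity) {
  stopifnot(is.list(message), !is.null(message$data))
  # Pub/Sub push messages carry their payload base64-encoded in `data`
  payload <- rawToChar(base64_dec(message$data))
  f(payload)
}

pub <- function(x) paste("Echo:", x)

# Simulate the `message` field of a push request (values invented for the demo)
fake_message <- list(
  data = base64_enc(charToRaw("hello from pubsub")),
  messageId = "1"
)

result <- decode_pubsub(fake_message, pub)
print(result)
```

Testing the handler this way lets you check your pub() logic in a local R session before deploying the plumber script.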

Creating a Dockerfile with containerit

containerit is not yet on CRAN so can’t be packaged with the CRAN version of googleCloudRunner, but it’s a useful package to work with since it auto-creates Dockerfiles from R files.

To install it use

remotes::install_github("o2r-project/containerit")

See containerit’s website for more details on how to use it.

An example of using it to help create plumber Dockerfiles is below:

library(containerit)
library(assertthat) # provides assert_that() and is.readable()

cr_dockerfile_plumber <- function(deploy_folder, ...){

  # build a Dockerfile object from the R files found in deploy_folder
  docker <- dockerfile(
    deploy_folder,
    image = "trestletech/plumber",
    offline = FALSE,
    cmd = Cmd("api.R"),
    maintainer = NULL,
    copy = list("./"),
    container_workdir = NULL,
    entrypoint = Entrypoint("R",
      params = list(
        "-e",
        "pr <- plumber::plumb(commandArgs()[4]); pr$run(host='0.0.0.0', port=as.numeric(Sys.getenv('PORT')))")
    ),
    filter_baseimage_pkgs = FALSE,
    ...)

  # write the Dockerfile next to the API code and check it is readable
  write_to <- file.path(deploy_folder, "Dockerfile")
  write(docker, file = write_to)

  assert_that(
    is.readable(write_to)
  )

  message("Written Dockerfile to ", write_to)

  print(docker)

  docker
}

Deploying Shiny to Cloud Run

Due to Shiny’s stateful nature, Cloud Run is not a perfect fit for Shiny, but it can be configured to run Shiny apps that scale from 0 (i.e. no cost) up to 80 connections at once on one instance. Limiting to one instance is necessary to avoid requests being sent to another Shiny container instance and losing the user session. Shiny also needs to have certain websocket features disabled, and there is a 15 minute session timeout.

This means you can’t scale to a billion like other Cloud Run apps, and need to keep an eye on the load of the Shiny app (which will depend on your code) to see if 80 connections are too much for the CPU and Memory you assign to the Cloud Run instance. However this should still be a good fit for a lot of data science applications.

A minimal working example has been created by @randy3k here: https://github.com/randy3k/shiny-cloudrun-demo which can be deployed to Cloud Run via a fork here:

library(googleCloudRunner)

# a repo with the Dockerfile template

repo <- cr_buildtrigger_repo("MarkEdmondson1234/shiny-cloudrun-demo")

# deploy a cloud build trigger so each commit builds the image

cr_deploy_docker_trigger(

repo,

image = "shiny-cloudrun"

)

# deploy to Cloud Run

cr_run(sprintf("gcr.io/%s/shiny-cloudrun:latest",cr_project_get()),

name = "shiny-cloudrun",

concurrency = 80,

max_instances = 1)

If you need to change the CPU or memory for the one instance, you can use the cpu and memory arguments in cr_buildstep_run() and the functions that build on it, such as cr_run(). At the time of writing, the maximum allowed was 2 CPUs and 2 gibibytes (2Gi):

# deploy to Cloud Run with 2Gi of memory per instance and 2 CPUs

cr_run(sprintf("gcr.io/%s/shiny-cloudrun:latest",cr_project_get()),

name = "shiny-cloudrun",

concurrency = 80,

max_instances = 1,

cpu = 2,

memory = "2Gi")

Or if you have a local Shiny app in shiny_cloudrun/ with the appropriate Dockerfile and Shiny configuration, the full Docker build and deployment pipeline can be carried out with:

# deploy the app version from this folder

cr_deploy_run("shiny_cloudrun/",

remote = "shiny-cloudrun2",

tag = c("latest","$BUILD_ID"),

max_instances = 1, # required for shiny

concurrency = 80)

Shiny app on Cloud Run with googleAuthR authentication

If you want to have authentication options for the user as they visit

the app on Cloud Run, the below is a working example that is deployed

here:

https://shiny-cloudrun-sc-ewjogewawq-ew.a.run.app/

Folder:

|

|- app.R

|- Dockerfile

|- client.json

|- shiny-customized.config

app.R

library(shiny)

library(searchConsoleR)

library(googleAuthR)

gar_set_client(web_json = "client.json",

scopes = "https://www.googleapis.com/auth/webmasters")

ui <- fluidPage(

googleAuth_jsUI('auth', login_text = 'Login to Google'),

tableOutput("sc_accounts")

)

server <- function(input, output, session) {

auth <- callModule(googleAuth_js, "auth")

sc_accounts <- reactive({

req(auth())

with_shiny(

list_websites,

shiny_access_token = auth()

)

})

output$sc_accounts <- renderTable({

sc_accounts()

})

}

shinyApp(ui = ui, server = server)

Dockerfile

FROM rocker/shiny

# install R package dependencies

RUN apt-get update && apt-get install -y \

libcurl4-openssl-dev libssl-dev \

## clean up

&& apt-get clean \

&& rm -rf /var/lib/apt/lists/ \

&& rm -rf /tmp/downloaded_packages/ /tmp/*.rds

## Install packages from CRAN

RUN install2.r --error \

-r 'http://cran.rstudio.com' \

googleAuthR searchConsoleR

COPY shiny-customized.config /etc/shiny-server/shiny-server.conf

COPY client.json /srv/shiny-server/client.json

COPY app.R /srv/shiny-server/app.R

EXPOSE 8080

USER shiny

# avoid s6 initialization

# see https://github.com/rocker-org/shiny/issues/79

CMD ["/usr/bin/shiny-server"]

client.id and GCP setup

The client.json was a web client json from my project:

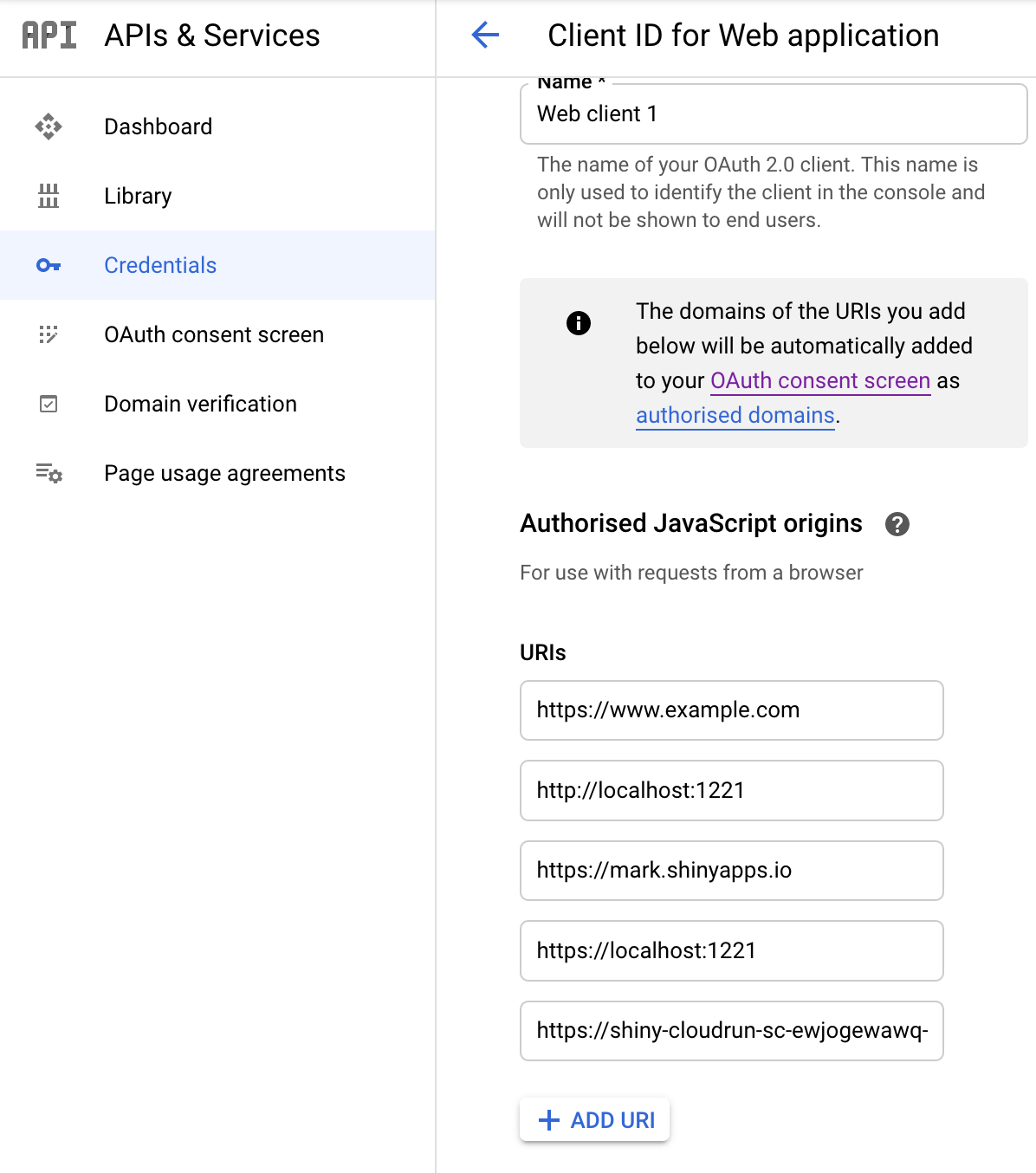

{"web":{"client_id":"10XXX","project_id":"XXXX","auth_uri":"https://accounts.google.com/o/oauth2/auth","token_uri":"https://accounts.google.com/o/oauth2/token","auth_provider_x509_cert_url":"https://www.googleapis.com/oauth2/v1/certs","client_secret":"XXXXX","redirect_uris":["http://localhost"],"javascript_origins":["https://www.example.com","http://localhost:1221"]}}

You need to add the domain where the Cloud Run service is running to the JavaScript origins within the GCP console; you get this domain after deploying the app. (GCP console > APIs & Services > Credentials > click on the Web Client ID you are using > add the URL to Authorised JavaScript origins).

In the example case this is

https://shiny-cloudrun-sc-ewjogewawq-ew.a.run.app/

shiny-customized.config

This is the configuration file for Shiny that will overwrite the default one - its main purpose is turning off the websocket functionality that is not supported on Cloud Run

disable_protocols websocket xdr-streaming xhr-streaming iframe-eventsource iframe-htmlfile xdr-polling iframe-xhr-polling;

run_as shiny;

server {

listen 8080;

location / {

site_dir /srv/shiny-server;

log_dir /var/log/shiny-server;

directory_index off;

}

}

Deploying

You can then deploy similar to the example above, which will build the Dockerfile and then deploy it to Cloud Run.

# deploy the app version from this folder

cr_deploy_run("shiny_cloudrun/app/",

remote = "shiny-cloudrun-sc",

tag = c("latest","$BUILD_ID"),

max_instances = 1, # required for shiny

concurrency = 80)

Accessing authenticated Cloud Run apps

When deploying via cr_run() or otherwise, you have the option to only allow authenticated requests to your app by setting the argument allowUnauthenticated=FALSE. This lets you deploy private Docker containers and only allow requests from users or services that have the correct JSON Web Token (JWT) in their headers. Note this is distinct from OAuth2 access, which will not work.

Accessing those services is documented here. They can be reached by any service that can make HTTP requests and has the appropriate authorisation via a service account key.

To enable easier access from R, cr_jwt_create() and its companion functions let you use your own local service account key to generate appropriate JWTs, so that you can access the private Cloud Run URL.

A demo is below, assuming you have already deployed the private Cloud Run service:

# The private authenticated access only Cloud Run service

the_url <- "https://authenticated-cloudrun-ewjogewawq-ew.a.run.app/"

# creating the JWT and token from your service account key used for googleCloudRunner

jwt <- cr_jwt_create(the_url, service_json = Sys.getenv("GCE_AUTH_FILE"))

token <- cr_jwt_token(jwt, the_url)

# call Cloud Run app using token with any httr verb

library(httr)

res <- cr_jwt_with(GET("https://authenticated-cloudrun-ewjogewawq-ew.a.run.app/hello"),

token)

content(res)

Scheduling Cloud Run applications

Via Cloud Scheduler you can set up a scheduled hit of your HTTP endpoints, via GET, POST or any other methods you have coded into your app.
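Cloud Scheduler schedules are written in the five-field unix-cron format. As a minimal sketch, the hypothetical helper parse_cron() below (not part of googleCloudRunner) labels each field of the "4 16 * * *" schedule used in this section, which reads as minute 4 of hour 16, every day, in the schedule's time zone.

```r
# Hypothetical helper for illustration: label the five unix-cron fields
parse_cron <- function(schedule) {
  fields <- strsplit(trimws(schedule), "\\s+")[[1]]
  stopifnot(length(fields) == 5)
  setNames(
    fields,
    c("minute", "hour", "day_of_month", "month", "day_of_week")
  )
}

cron_fields <- parse_cron("4 16 * * *")
print(cron_fields)
```

A "*" in a field means every value of that field, so only the minute and hour are pinned in this schedule.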

# get your previously deployed Cloud Run app

my_app <- cr_run_get("your-cloud-run")

# add any URL parameters your app needs etc.

endpoint <- paste0(my_app$status$url, "?msg=blah")

# make the HTTP target

run_me <- HttpTarget(uri = endpoint, httpMethod = "GET")

cr_schedule("cloud-run-scheduled", schedule = "4 16 * * *", httpTarget = run_me)

Private Cloud Run service scheduling

cr_run_schedule_http() will help you create the HTTP

endpoint for you to pass to cr_schedule() that will work

with Cloud Run apps with allowUnauthenticated = FALSE - it

will by default create a service email for invoking your app called

{your-app}-cloudrun-invoker@{your-project}.iam.gserviceaccount.com

# deploy a non-public app

cr_deploy_run("your-cloud-run", allowUnauthenticated = FALSE)

# get your previously deployed Cloud Run app

my_app <- cr_run_get("your-cloud-run")

# add any URL parameters your app needs etc.

endpoint <- paste0(my_app$status$url, "?msg=blah")

# generate the service email invoker that was created upon deployment

# "your-cloud-run-cloudrun-invoker@your-project.iam.gserviceaccount.com"

email <- cr_run_email("your-cloud-run")

# make the HTTP target for authenticated access only

run_me <- cr_run_schedule_http(endpoint, email = email, http_method = "GET")

# schedule the call to the endpoint of your Cloud run app

cr_schedule("cloud-run-scheduled", schedule = "4 16 * * *", httpTarget = run_me)

Deploying from another Google Cloud project

By default the build will only allow deployments from the same Google Cloud Project - if you want to deploy container images from other projects you need to give the Cloud Run Service Agent for the Cloud Run project access to the source project. See this Google help file.

This is available via the cr_setup_service() function,

using the role lookup

cr_setup_role_lookup("run_agent"):

cr_setup_service("service-{project-number}@serverless-robot-prod.iam.gserviceaccount.com",
                 roles = cr_setup_role_lookup("run_agent"))
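The {project-number} placeholder above needs to be replaced with the numeric project number of the Cloud Run project. A minimal sketch of filling it in is below; run_service_agent() is a hypothetical helper and "123456789012" is a made-up project number, so neither comes from googleCloudRunner.

```r
# Hypothetical helper: build the Cloud Run Service Agent email.
# The project number is passed as a string to avoid numeric formatting issues.
run_service_agent <- function(project_number) {
  stopifnot(is.character(project_number), nzchar(project_number))
  sprintf(
    "service-%s@serverless-robot-prod.iam.gserviceaccount.com",
    project_number
  )
}

# "123456789012" is a placeholder, not a real project
agent_email <- run_service_agent("123456789012")
print(agent_email)
```

The resulting string could then be passed as the first argument to cr_setup_service() in place of the templated email.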